Entrepreneur's Toolkit: Tools and Technology for Your Startup - Part 1

When you first embark on your startup journey, there is a seemingly never-ending list of things to do, and everything on that list demands your time and attention.

In fact, before you even formally start your company by creating a C-Corp in Delaware (or whatever type of entity you're forming, and wherever you're locating it), there are a lot of things you should be doing, for example:

Refining your vision

Validating your vision and idea with potential customers

Building prototypes and starting work on your minimum viable product (MVP)

Selling your product to customers - if you've got customers who are willing to pre-pay for your product, knowing that it won't be available for X months, that’s a great sign that you're solving a real pain point

Building your team - or at least talking to people who you may want to be part of your team as things progress

During this process, you'll need to start using various tools to collaborate with people, manage the development of your MVP and communicate with customers.

Once you formally start your company, you'll also need to deal with things like accounting, tax filing, payroll and a whole lot more.

To help manage all of this, you're going to need tools and technology. There is a myriad of SaaS-based tools out there, and it's time-consuming and overwhelming to sift through them all, figure out which ones to try, and then decide which ones to adopt.

I've spent countless hours over the years researching, trying out, and then actively using different pieces of software across various startups. To help give you a head start, here's my list of tools that you may want to check out.

Since there is a lot to cover here, I've broken this post up into 4 parts:

Part 1 (this part): productivity, chat and collaboration, audio video conferencing and file sharing

Part 2: password management, electronic signatures, accounting, business phone number and project management

Part 3: customer relationship management and support, email marketing, website, building your own database and a couple of other bits and pieces

Part 4: HR and payroll, payment processing on your website and in person, payment vendors in the US and internationally

Productivity Suite (email, word processing etc…)

Selecting a productivity suite to give you customized email (e.g. @yourcompany.com), word processing, spreadsheets, presentations, etc. is going to be one of the first things you need to do. It will also drive many of the other choices you make. Fortunately, there are really only two options you need to worry about.

Microsoft Office 365

Microsoft's offering in the productivity space is called Office 365. Depending on which package you choose, it includes hosted company-branded email via Exchange along with desktop and online versions of Outlook for email, Word, Excel, PowerPoint etc… On their website, you'll find 3 different plans for personal, 3 for small business and at least 4 for enterprise. It's confusing, but here's the summary: for a startup, you'll be looking at the "Business" section:

Plans start at $5/user/mo, but you'll probably want the $12.50/user/mo Business Premium version - this gets you cloud hosted company email, all the Office apps (online and desktop versions) along with a bunch of other stuff we'll discuss below.

G Suite

G Suite is Google's competitor to Microsoft Office 365. It's a very capable offering and many companies use it. However, Google's apps are browser-based. I prefer the richness of a desktop app along with its offline capabilities (I spend a lot of time on planes). Google Docs, Sheets and Slides are good, but they're not as good as Microsoft Word, Excel and PowerPoint respectively. Google's pricing packages are a lot simpler:

Starts at $6/user/mo for the Basic version, but you'll probably want the $12/user/mo Business version.

My Recommendation: Office 365

I prefer the desktop versions of Word, Excel and PowerPoint to their online equivalents or Google's offerings, so that puts me squarely in the Office 365 camp. But to be honest, both options are good products. Choose whichever you're most comfortable with.

Chat and Collaboration

Your choice of productivity suite will heavily influence this decision.

Slack

Slack is a tool for team collaboration and instant messenger-style chat between team members. Slack has risen to popularity in recent years, sparking competition from Microsoft and Google (see below). Slack claims you can replace email with it. Personally, I don't really buy that: no matter what you do internally, you'll need to communicate externally with customers and partners using whatever they use, which is email. I also think email is a better tool for longer-form communication, while instant messaging is useful for short messages and quick responses. There is a free version, but if you need more features, paid plans start at $6.67/user/mo.

Microsoft Teams

Teams is Microsoft's Slack competitor. It has deep ties into the Office 365 ecosystem and is already included for free in various Office 365 plans.

Google Hangouts Chat

Hangouts Chat is Google's Slack competitor. As you would expect, it has deep ties into the G Suite ecosystem and is already included in G Suite plans.

Skype

If you're looking to save money, Skype can also be used for instant messenger-style chat. It's geared more towards personal communication, so it isn't optimized for business chat. It's OK for very small teams, and it's totally free, so there's that.

My Recommendation: Free version of Slack then Teams/Hangouts

Slack is a very popular choice, and the free version is great for small teams. However, once you exceed the limits of the free tier and need to move to a paid plan, it's tough to justify $6.67/user/mo when you're already paying ~$12/user/mo for a productivity suite that includes an equivalent chat tool. You'd be increasing your monthly per-user cost by roughly 50%. Slack is arguably ahead of its competitors at the time of this writing, but Microsoft and Google are probably pouring hundreds of millions of dollars into catching up. Is Slack worth the extra money? Probably not.

After running into the limits of the free version of Slack, I'd move to whatever your productivity suite includes, namely Teams or Hangouts.

Audio and Video Conferencing - Internally Within Your Team

You'll need tools to do audio and video conferencing within your team.

Slack

Slack has audio and video conferencing built in, although I've found its features and quality don't match up with the offerings below. I'm sure it will improve over time.

Teams

Teams has this capability built in. In the early days, it was impossible to invite people outside your organization, for example, contractors without a @yourcompany.com email address, to join a meeting. That was rather problematic. They've fixed that now, and Teams is a good choice for meetings within the team (meaning employees and contractors). It's also included in various versions of Office 365.

Google Hangouts Meet

Hangouts Meet is G Suite's audio and video conferencing offering, and it's included in your G Suite subscription.

Skype

If you're penny-pinching and working with a small team, you can use Skype completely free. Everyone will need a Skype account, and each team member will need to add the others to their contact list to easily initiate calls.

My Recommendation: Teams or Hangouts

Go with whatever your productivity suite offers here.

Audio and Video Conferencing - Externally with Customers and Partners

Besides internal communication, you're going to need to communicate with external customers and partners. While you can technically use any of the above "internal" tools for this, they're not (yet) a great solution to the problem.

Zoom

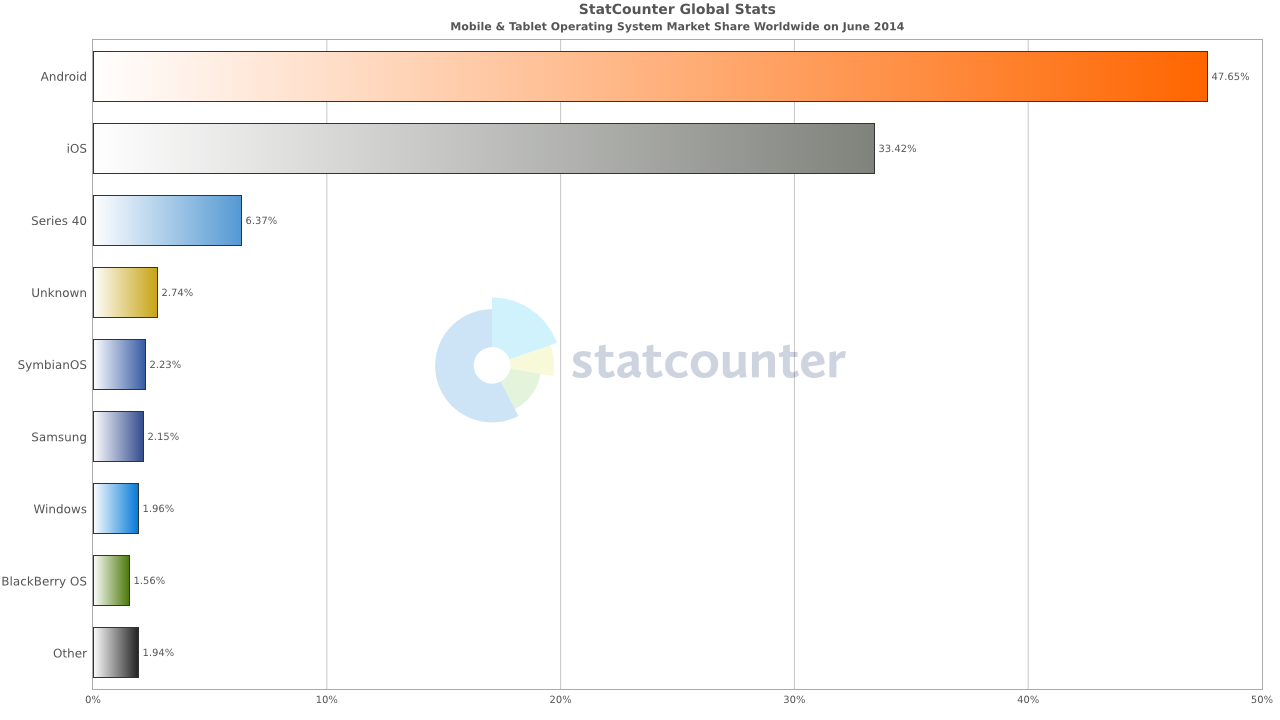

Zoom has risen to become one of the dominant video conferencing platforms in recent years. It's super simple to use and, importantly, doesn't require your participants to jump through a lot of hoops to get into a meeting. Participants will need to install an app to join, but it's free and easy to install on a PC, Mac, iOS or Android device. There is a free version limited to 40-minute meetings, so you'll probably want a paid version, starting at $15/mo. You don't want to be cut off at 40 minutes during an important customer presentation :/

My Recommendation: Zoom

For external meetings, you can't beat the simplicity of Zoom. It's just a better user experience compared to Hangouts Meet, Teams or Skype. Hangouts Meet is pretty good, followed by Teams then Skype for this purpose. See if you like the experience included with your productivity suite, but if not, go with Zoom.

File Sharing

This is another area heavily influenced by your choice of productivity suite.

Dropbox

Dropbox was one of the first companies to simplify file sharing and make it really easy for individuals. They started with a personal product so you could access your files from any device, and they've since expanded their offering to include features for business, both large and small. Microsoft and Google, along with a ton of other companies, also compete with them. For a team version of Dropbox, you'll be looking at $12.50/user/mo with a minimum of 3 people, so at least $37.50/mo.

OneDrive for Business

OneDrive for Business is Microsoft's offering. Its biggest selling point is that it's included for free with various Office 365 plans. However, it's actually based on SharePoint, and while SharePoint is a good product for enterprises (it has lots of enterprise-oriented features), it's clunky. Microsoft has tried to put a nicer interface on top of SharePoint to make it look more like the consumer version of OneDrive (which is really good), but they are totally different products.

Google Drive

G Suite's file storage solution is called Drive. It's similar in concept to OneDrive for Business and is included with G Suite.

OneDrive Personal

If you're penny-pinching, you can use Microsoft's OneDrive Personal product and set up shared folders to share files with your team. OneDrive Personal is simple and easy to use, but you'll be missing out on a lot of the management features you'll want as your team grows.

My Recommendation: Dropbox if you want to spend the money, OneDrive for Business / Google Drive otherwise

If you are willing to spend the money, I think Dropbox has the best product in this space. But at $12.50/user/mo with a minimum of 3 users, it's pretty spendy - you're basically doubling the cost of your core productivity suite.

Is it really worth the extra money? As with Slack for chat and collaboration, you'll need to decide whether it's so much better that you're willing to pay more for it. If you're happy with whatever is included with your productivity suite, i.e. OneDrive for Business or Google Drive, go with that instead.
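The cost arguments for Slack and Dropbox both come down to simple per-user arithmetic. Here's a rough sketch, assuming the prices quoted in this post (Office 365 Business Premium at $12.50/user/mo, Slack at $6.67/user/mo, Dropbox at $12.50/user/mo with a 3-user minimum). The function names are just for illustration, and vendor pricing changes, so plug in current numbers before relying on it.

```python
# Rough monthly cost comparison using the prices quoted in this post.
# These are assumptions from the time of writing; check current vendor pricing.

SUITE_PER_USER = 12.50     # Office 365 Business Premium, $/user/mo
SLACK_PER_USER = 6.67      # Slack paid plan, $/user/mo
DROPBOX_PER_USER = 12.50   # Dropbox team plan, $/user/mo
DROPBOX_MIN_USERS = 3      # Dropbox team plan minimum number of users


def monthly_cost(users: int, add_slack: bool, add_dropbox: bool) -> float:
    """Total monthly spend for a team of `users` people on the suite plus optional add-ons."""
    total = SUITE_PER_USER * users
    if add_slack:
        total += SLACK_PER_USER * users
    if add_dropbox:
        # You pay for at least the minimum number of users, even with a smaller team.
        total += DROPBOX_PER_USER * max(users, DROPBOX_MIN_USERS)
    return round(total, 2)


def increase_over_suite(users: int, add_slack: bool, add_dropbox: bool) -> float:
    """Fractional increase in monthly spend compared with the productivity suite alone."""
    base = monthly_cost(users, False, False)
    return (monthly_cost(users, add_slack, add_dropbox) - base) / base


if __name__ == "__main__":
    for label, slack, dropbox in [("Suite + Slack", True, False),
                                  ("Suite + Dropbox", False, True)]:
        pct = increase_over_suite(5, slack, dropbox) * 100
        print(f"{label}: {pct:.0f}% more per month for a 5-person team")
```

For a 5-person team, adding Slack works out to about 53% more per month and adding Dropbox to 100% more, which is where the "roughly 50%" and "doubling" figures come from. For a team of 2, the Dropbox minimum means you'd pay for 3 users anyway.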

Stay tuned for parts 2, 3 and 4 for more recommendations on tools and technology you'll need for your startup.